(Moving this to a separate issue for better relevance :) )

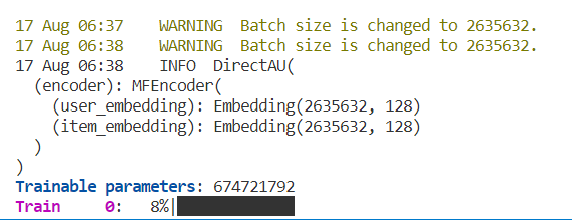

I see a warning (screenshot above) that the batch size is changed to 2635632 (the number of users). Is this by design? I want to use a much smaller batch size so that training is faster; currently each epoch takes a very long time, since `torch.pdist` in the uniformity loss is O(B^2), where B is the batch size.
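To put rough numbers on the quadratic cost: `torch.pdist` returns one distance per unordered pair of rows, i.e. B(B-1)/2 values. The sketch below is only back-of-the-envelope arithmetic; the helper names are made up for illustration, and the memory figure assumes float32 outputs (4 bytes each) and ignores any intermediate buffers.

```python
def pdist_pair_count(batch_size: int) -> int:
    """Number of pairwise distances torch.pdist returns for `batch_size` rows."""
    return batch_size * (batch_size - 1) // 2


def pdist_output_gib(batch_size: int, bytes_per_value: int = 4) -> float:
    """Approximate size of the pdist output in GiB (assumes float32 by default)."""
    return pdist_pair_count(batch_size) * bytes_per_value / 2**30


# Compare two typical batch sizes with the batch size from the warning.
for b in (1024, 4096, 2_635_632):
    print(b, pdist_pair_count(b), f"{pdist_output_gib(b):.2f} GiB")
```

So going from a batch of a few thousand to one the size of the whole user set grows the pairwise work by several orders of magnitude.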

How would you suggest enforcing a smaller batch size? (Currently, setting the batch size either as a command-line argument or in the config seems to get overwritten.)

Where in the code is the batch size reset? (`_batch_size_adaptation`?)

Are there any changes to the dataloader in this repository compared with the standard RecBole general dataloader?
