You can find the project Here. The API Docs for the DS portion can be found here Here

High level overview presentation.

Deep dive into cleaning the data.

| Scott Maxwell | Matthew Sessions | Luke Townsend | jimmy 'Zeb' Smith |

|---|---|---|---|

| Steven Reiss | Stephanie Miller | Amy NLe | Robert Tom |

|---|---|---|---|

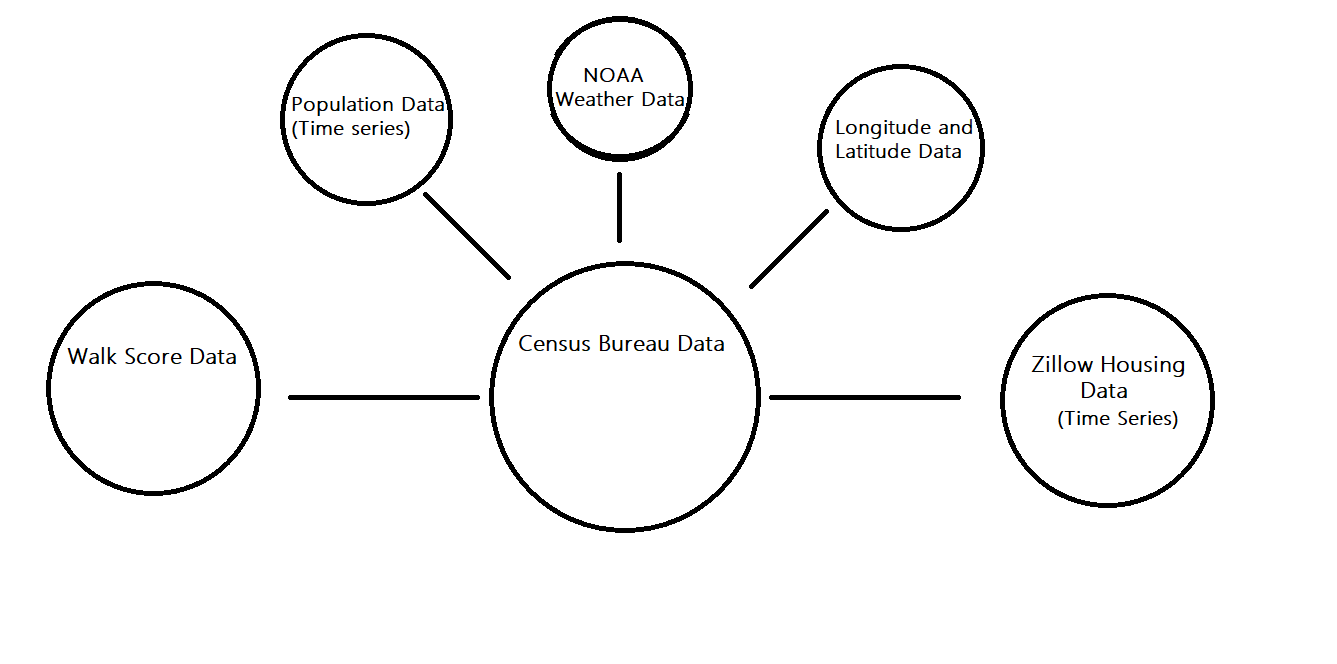

Citrics provides statistics on 28,925 different locations in the United States that are available for viewing. This was created with a team of web developers and data engineers. These statistics include information about housing prices, employment, industry, lifestyle and much more, sources are listed below.

- Python

- Flask

- Docker

- Jupyter Notebooks

- Mongo DB

- AWS Elastic Beanstalk/Amplify/S3/Route 53

- AWS PostgreSQL

The following models are using a K-Nearest Neighbors KD-Tree algorithm from the Scikit-Learn Python Library

Clicking on the links for each model will take you to the .py file for that model within this repo

Features & Metrics Used:

- Median Rent

- Occupants per room

- Housing by bedrooms

- Vacancy Rate

- Rent Pricing

- Historical Property Value

- Historical Property Value Growth by %

Features & Metrics Used:

- Industry Types

- Health insurance

- Salary

- Commute & travel time

- Retirement

- Unemployment

Features & Metrics used:

- Education

- Language

- Ethnicity

- Birth Rate

- Population

A recommendation questionnaire that supplies the user with a recommended city Features & Metrics used:

- Population

- Income

- Monthly Housing

- Temperature Preference

- Industry*

*Note that Industry is optional on the website, due to lack of adequate data for all cities within the database.

Features & Metrics used:

- Education

- Language

- Ethnicity

- Birth Rate

- Population

Features & Metrics used:

- Education

- Language

- Ethnicity

- Birth Rate

- Population

Note for Library Conflicts:

- AWS' Elastic Beanstalk has a hard time runing Numpy and Scipy. These libraries power Sklearn. Also, the joblib library had a hard time running models that were trained on different operating systems. Once we found models that worked, we exported the code to a python script and ran it on a Linux based machines running Python 3.6. We then used Docker/Dockerhub to contain and ship our flask app, and then connected it to AWS to test in Elastic Beanstalk. These steps allowed us to seamlessly deploy predictive models.

- Census Bureau

- Zillow Housing

- Longitude & Latitude

- Population Growth

- Weather Data

- Bureau of Labor and Statistics

Notes: How we pushed our data to MongoDB

You can find documentation for the API here