Langfuse on Spaces

This guide shows you how to deploy Langfuse on Hugging Face Spaces and start instrumenting your LLM application for observability. This integration helps you to experiment with LLM APIs on the Hugging Face Hub, manage your prompts in one place, and evaluate model outputs.

What is Langfuse?

Langfuse is an open-source LLM engineering platform that helps teams collaboratively debug, evaluate, and iterate on their LLM applications.

Key features of Langfuse include LLM tracing to capture the full context of your application’s execution flow, prompt management for centralized and collaborative prompt iteration, evaluation metrics to assess output quality, dataset creation for testing and benchmarking, and a playground to experiment with prompts and model configurations.

This video is a 10 min walkthrough of the Langfuse features:

Why LLM Observability?

- As language models become more prevalent, understanding their behavior and performance is important.

- LLM observability involves monitoring and understanding the internal states of an LLM application through its outputs.

- It is essential for addressing challenges such as:

  - Complex control flows with repeated or chained calls, which make debugging difficult.

  - Non-deterministic outputs, which make consistent quality assessment harder.

  - Varied user intents, which must be understood in depth to improve the user experience.

- Building LLM applications involves intricate workflows, and observability helps in managing these complexities.

Step 1: Set up Langfuse on Spaces

The Langfuse Hugging Face Space allows you to get up and running with a deployed version of Langfuse with just a few clicks.

To get started, click the button above or follow these steps:

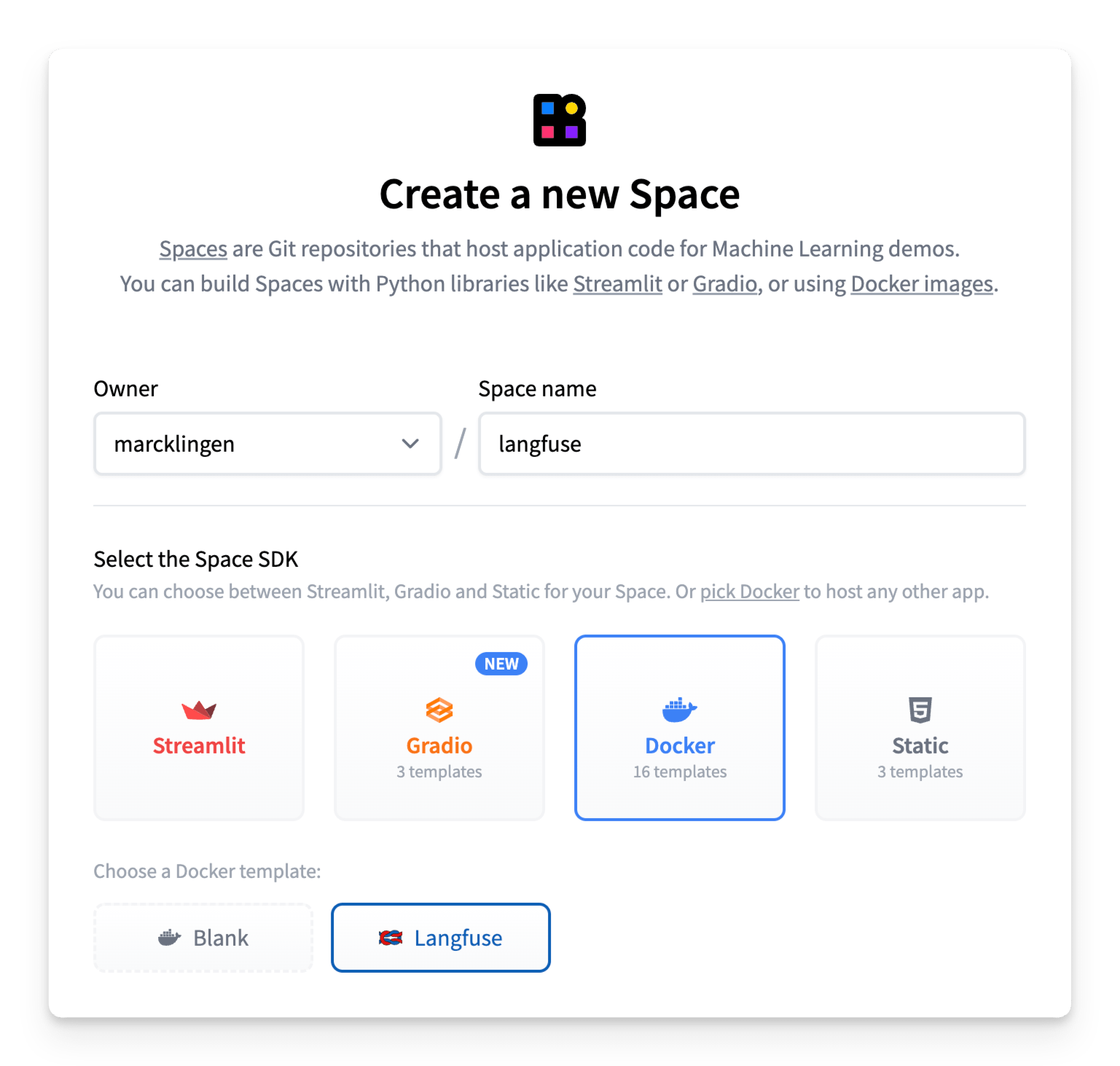

- Create a new Hugging Face Space

- Select Docker as the Space SDK

- Select Langfuse as the Space template

- Enable persistent storage to ensure your Langfuse data is persisted across restarts

- Ensure the Space is set to public visibility so that the Langfuse API and SDKs can access the app (see the note below for more details)

- [Optional but recommended] For a secure deployment, replace the default values of the environment variables:

  - NEXTAUTH_SECRET: Used to validate login session cookies. Generate a secret with at least 256 bits of entropy using openssl rand -base64 32.

  - SALT: Used to salt hashed API keys. Generate a secret with at least 256 bits of entropy using openssl rand -base64 32.

  - ENCRYPTION_KEY: Used to encrypt sensitive data. Must be 256 bits, that is, 64 characters in hex format. Generate it using openssl rand -hex 32.

- Click Create Space!
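If openssl is not available, the three secrets from the optional step above can also be produced with Python's standard library. The following is a minimal sketch, not part of the Langfuse template: the function name generate_langfuse_secrets is hypothetical, and it assumes that 32 random bytes encoded as base64 (for NEXTAUTH_SECRET and SALT) or as hex (for ENCRYPTION_KEY) are equivalent to the output of the openssl commands listed above.

```python
import base64
import secrets


def generate_langfuse_secrets() -> dict[str, str]:
    """Return fresh random values for the three secret environment variables.

    Hypothetical helper: mirrors `openssl rand -base64 32` and
    `openssl rand -hex 32` using 32 random bytes (256 bits) per value.
    """
    return {
        # 32 random bytes, base64-encoded (like `openssl rand -base64 32`)
        "NEXTAUTH_SECRET": base64.b64encode(secrets.token_bytes(32)).decode("ascii"),
        "SALT": base64.b64encode(secrets.token_bytes(32)).decode("ascii"),
        # 32 random bytes as 64 hex characters (like `openssl rand -hex 32`)
        "ENCRYPTION_KEY": secrets.token_hex(32),
    }


if __name__ == "__main__":
    for name, value in generate_langfuse_secrets().items():
        print(f"{name}={value}")
```

Copy the printed values into the corresponding environment variables of the Space before creating it, and do not commit them anywhere public.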

User Access

Your Langfuse Space is pre-configured with Hugging Face OAuth for secure authentication, so you’ll need to authorize read access to your Hugging Face account upon first login by following the instructions in the pop-up.

The Langfuse Space must be set to public visibility so that the Langfuse API and SDKs can reach the app. This means that, by default, any logged-in Hugging Face user can access the Langfuse Space.

You can prevent new users from signing up and accessing the Space by setting the AUTH_DISABLE_SIGNUP environment variable to true. Make sure you have signed in and authenticated to the Space at least once before setting this variable; otherwise your own user profile won't be able to authenticate.

Once inside the app, you can use the native Langfuse features to manage Organizations, Projects, and Users.

Note: If you’ve set the AUTH_DISABLE_SIGNUP environment variable to true to restrict access, and want to grant a new user access to the space, you’ll need to first set it back to false (wait for rebuild to complete), add the user and have them authenticate with OAuth, and then set it back to true.
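The toggle described in the note can also be scripted instead of edited by hand in the Space settings. The sketch below is an illustration under assumptions, not an official Langfuse procedure: set_signup_disabled is a hypothetical helper, the Space ID is a placeholder, and it assumes the api object is a huggingface_hub HfApi instance whose add_space_variable method accepts repo_id, key and value.

```python
def set_signup_disabled(api, space_id: str, disabled: bool) -> None:
    """Set AUTH_DISABLE_SIGNUP on the given Space.

    `api` is expected to behave like huggingface_hub.HfApi (assumption);
    `space_id` has the form "owner/space-name".
    """
    api.add_space_variable(
        repo_id=space_id,
        key="AUTH_DISABLE_SIGNUP",
        # Space variables are strings, so the boolean is written out explicitly
        value="true" if disabled else "false",
    )


# Example usage (requires huggingface_hub and a token with write access):
#
#   from huggingface_hub import HfApi
#   api = HfApi()
#   space = "your-username/your-langfuse-space"  # placeholder Space ID
#   set_signup_disabled(api, space, disabled=False)  # re-open sign-up
#   ...wait for the rebuild, let the new user authenticate with OAuth...
#   set_signup_disabled(api, space, disabled=True)   # close sign-up again
```

Passing the API client in as an argument keeps the helper easy to dry-run with a stand-in object before pointing it at a real Space.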

Step 2: Use Langfuse

Now that you have Langfuse running, you can start instrumenting your LLM application to capture traces and manage your prompts. Let’s see how!

Monitor Any Application

Langfuse is model agnostic and can be used to trace any application. Follow the get-started guide in Langfuse documentation to see how you can instrument your code.

Langfuse maintains native integrations with many popular LLM frameworks, including LangChain, LlamaIndex, and OpenAI, and offers Python and JS/TS SDKs to instrument your code. Langfuse also offers API endpoints to ingest data and has been integrated by other open-source projects such as Langflow, Dify, and Haystack.

Example 1: Trace Calls to HF Serverless API

As a simple example, here’s how to trace LLM calls to the HF Serverless API using the Langfuse Python SDK.

Be sure to first configure your LANGFUSE_HOST, LANGFUSE_PUBLIC_KEY and LANGFUSE_SECRET_KEY environment variables, and make sure you’ve authenticated with your Hugging Face account.
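A quick way to confirm that the three variables are in place before running any traced code is a small pre-flight check. This is a minimal sketch using only the standard library; the helper name missing_langfuse_vars is hypothetical and not part of the Langfuse SDK.

```python
import os

# The three variables named in this guide
REQUIRED_VARS = ("LANGFUSE_HOST", "LANGFUSE_PUBLIC_KEY", "LANGFUSE_SECRET_KEY")


def missing_langfuse_vars(env=None) -> list[str]:
    """Return the names of required variables that are unset or empty.

    `env` defaults to os.environ; pass a plain dict to check other settings.
    """
    env = os.environ if env is None else env
    return [name for name in REQUIRED_VARS if not env.get(name)]


if __name__ == "__main__":
    missing = missing_langfuse_vars()
    if missing:
        print("Missing Langfuse settings:", ", ".join(missing))
    else:
        print("All Langfuse settings are present.")
```

LANGFUSE_HOST should point at your deployed Space, and the two keys come from the project settings inside your Langfuse instance.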

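```python
# Langfuse's drop-in wrapper around the OpenAI SDK, so calls made through it are traced
from langfuse.openai import openai
from huggingface_hub import get_token

# Point the OpenAI-compatible client at the HF Serverless API
client = openai.OpenAI(
    base_url="https://api-inference.huggingface.co/v1/",
    api_key=get_token(),  # locally stored Hugging Face token
)

messages = [{"role": "user", "content": "What is observability for LLMs?"}]

response = client.chat.completions.create(
    model="meta-llama/Llama-3.3-70B-Instruct",
    messages=messages,
    max_tokens=100,
)

# Show the model's reply
print(response.choices[0].message.content)
```

Example 2: Monitor a Gradio Application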

We created a Gradio template space that shows how to create a simple chat application using a Hugging Face model and trace model calls and user feedback in Langfuse - without leaving Hugging Face.

To get started, duplicate this Gradio template space and follow the instructions in the README.

Step 3: View Traces in Langfuse

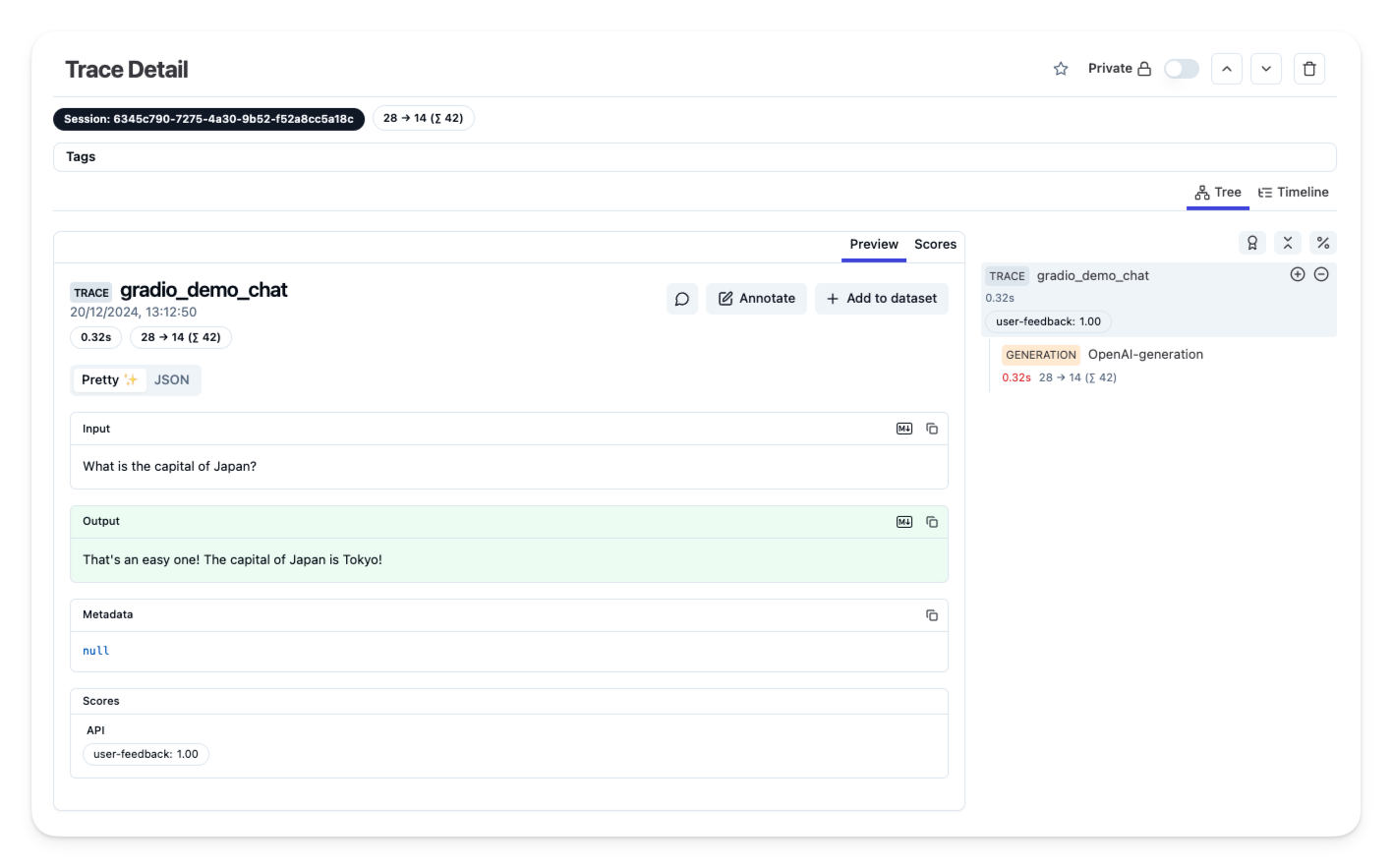

Once you have instrumented your application and ingested traces or user feedback, you can view them in the Langfuse UI.

Example trace in the Langfuse UI

Additional Resources and Support

For more help, open a support thread on GitHub discussions or open an issue.
