This repo contains the work I did in the self-project Deep into CNN.


In the first two weeks, I learned the basics of regression, machine learning, and gradient descent, and completed a few exercises on common datasets.
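As a rough illustration of the gradient descent idea from these weeks, here is a minimal sketch that fits a line by batch gradient descent on mean squared error. The function name `fit_line`, the toy data, the learning rate, and the epoch count are all assumptions made for this example; they are not taken from the exercises in this repo.

```python
def fit_line(xs, ys, lr=0.05, epochs=2000):
    """Fit y ~ w*x + b by batch gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradients of MSE = (1/n) * sum((w*x + b - y)^2) with respect to w and b
        grad_w = (2.0 / n) * sum((w * x + b - y) * x for x, y in zip(xs, ys))
        grad_b = (2.0 / n) * sum((w * x + b - y) for x, y in zip(xs, ys))
        # Step against the gradient
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Toy data generated from y = 2x + 1 (made up for illustration)
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
w, b = fit_line(xs, ys)
```

On this noise-free toy data the fitted `w` and `b` should end up close to 2 and 1. A learning rate that is too large would make the updates diverge instead.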


In weeks 3 and 4, I learned about neural networks and the PyTorch framework, and built some simple neural networks to classify data.

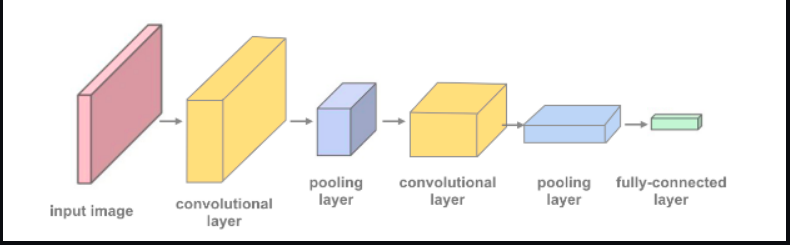

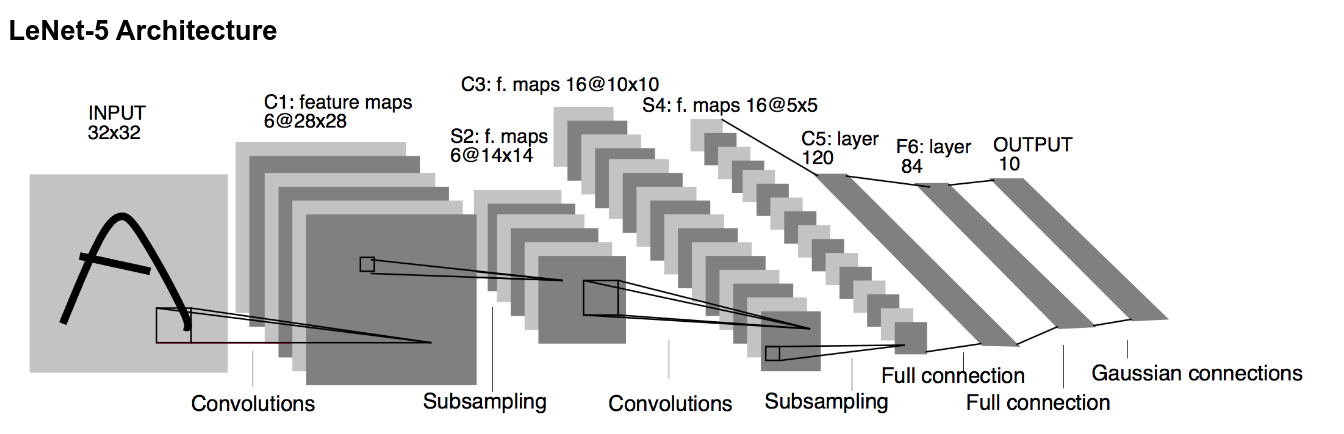
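For reference, a simple classifier of the kind mentioned above might look like the sketch below. The synthetic two-class data, the layer sizes, the learning rate, and the number of epochs are assumptions chosen for illustration; the actual networks in this repo may differ.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Synthetic two-class data (hypothetical): label is 1 when x0 + x1 > 0
X = torch.randn(200, 2)
y = (X[:, 0] + X[:, 1] > 0).long()

# Small fully connected network: 2 inputs -> 16 hidden units -> 2 class scores
model = nn.Sequential(
    nn.Linear(2, 16),
    nn.ReLU(),
    nn.Linear(16, 2),
)
loss_fn = nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

# Full-batch training loop
for epoch in range(300):
    optimizer.zero_grad()
    loss = loss_fn(model(X), y)
    loss.backward()
    optimizer.step()

# Accuracy on the training data
with torch.no_grad():
    accuracy = (model(X).argmax(dim=1) == y).float().mean().item()
```

The loop follows the usual PyTorch pattern: clear gradients, compute the loss, backpropagate, then let the optimizer update the weights.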

Implemented a simple CNN architecture in PyTorch with 2 convolutional layers, 2 pooling layers, and one fully connected layer to classify the CIFAR dataset, achieving an accuracy of 69%.

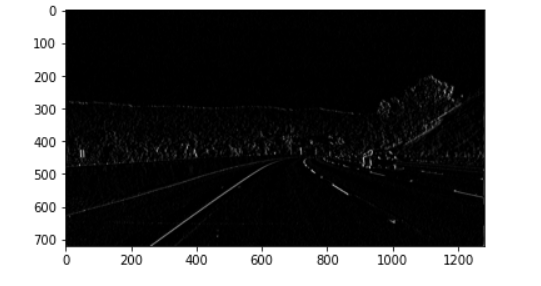

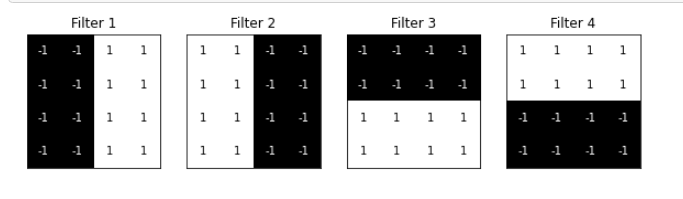

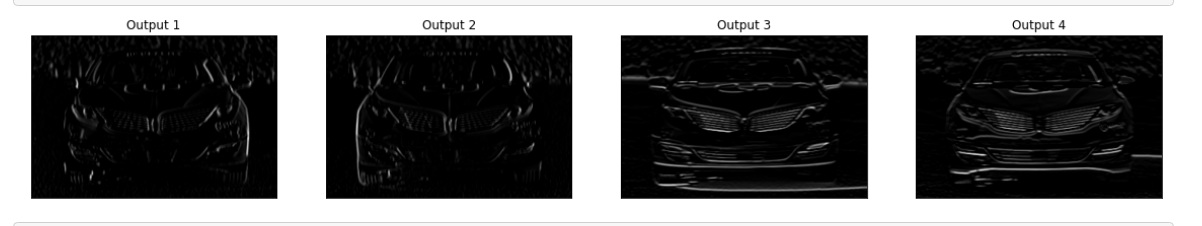

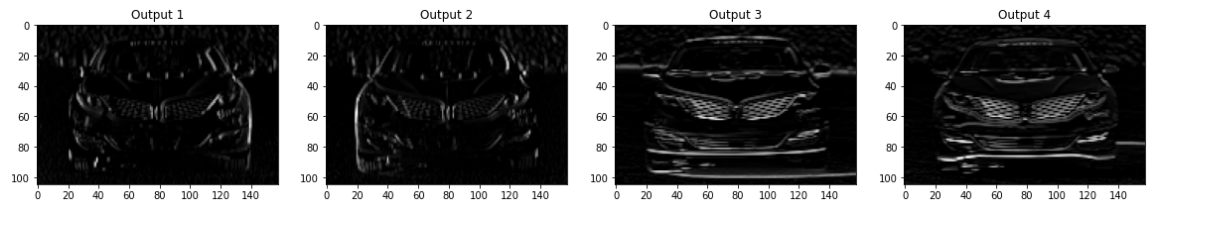
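The layout described above (2 conv layers, 2 pooling layers, one fully connected layer) can be sketched roughly as follows. The class name `SimpleCNN`, the channel counts, the kernel sizes, and the 32x32 RGB input size are assumptions for this example, not necessarily the values used in the notebook.

```python
import torch
from torch import nn


class SimpleCNN(nn.Module):
    """Two conv + two max-pool layers followed by one fully connected layer."""

    def __init__(self, num_classes=10):
        super().__init__()
        self.features = nn.Sequential(
            nn.Conv2d(3, 16, kernel_size=3, padding=1),   # 3x32x32 -> 16x32x32
            nn.ReLU(),
            nn.MaxPool2d(2),                              # -> 16x16x16
            nn.Conv2d(16, 32, kernel_size=3, padding=1),  # -> 32x16x16
            nn.ReLU(),
            nn.MaxPool2d(2),                              # -> 32x8x8
        )
        self.classifier = nn.Linear(32 * 8 * 8, num_classes)

    def forward(self, x):
        x = self.features(x)
        # Flatten everything except the batch dimension before the FC layer
        return self.classifier(torch.flatten(x, 1))


model = SimpleCNN()
logits = model(torch.randn(4, 3, 32, 32))  # a random batch standing in for CIFAR images
```

Each pooling layer halves the spatial size, so a 32x32 input reaches the fully connected layer as 32 feature maps of 8x8.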

Then went on to visualize a CNN architecture: what happens to the input after the convolutional and max-pooling layers, basically how CNNs do their magic.
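One possible way to look at intermediate outputs is to attach forward hooks to the layers of interest. The sketch below is an assumption about how this could be done, not the approach used in the notebook; the tiny `layers` stack, the `save_output` helper, and the 28x28 single-channel input are hypothetical.

```python
import torch
from torch import nn

# A tiny conv -> ReLU -> max-pool stack, used only to show the mechanics
layers = nn.Sequential(
    nn.Conv2d(1, 4, kernel_size=3, padding=1),
    nn.ReLU(),
    nn.MaxPool2d(2),
)

feature_maps = {}


def save_output(name):
    """Return a forward hook that stores the layer's output under `name`."""
    def hook(module, inputs, output):
        feature_maps[name] = output.detach()
    return hook


layers[0].register_forward_hook(save_output("conv"))
layers[2].register_forward_hook(save_output("pool"))

image = torch.randn(1, 1, 28, 28)  # random tensor standing in for one grayscale image
layers(image)

shapes = {name: tuple(fm.shape) for name, fm in feature_maps.items()}
```

Each entry in `feature_maps` holds one 2D map per channel, which can then be drawn with any image plotting tool. Here the conv output keeps the 28x28 size and the pooled output is 14x14.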

Using PyTorch, with binary cross entropy as the loss function and SGD as the optimization algorithm, trained it on the MNIST dataset and then tuned the hyperparameters.

- Imported the CIFAR-10 dataset from the PyTorch utilities.

- Then loaded it using the transforms library, augmented it, and split it into training and testing parts.

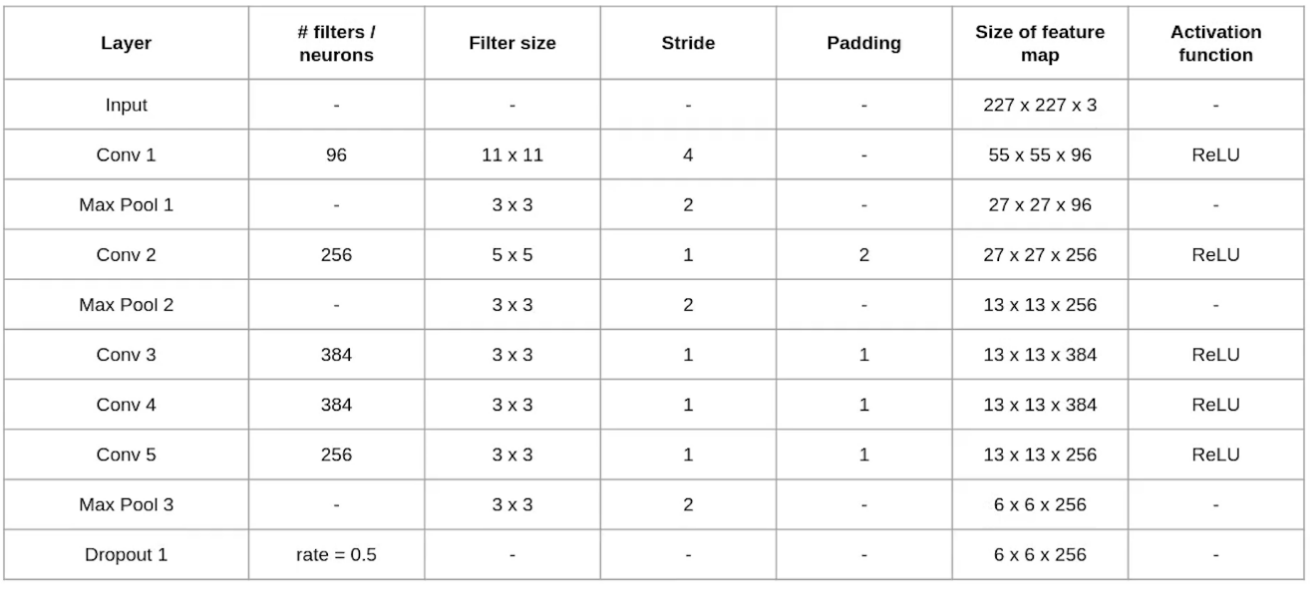

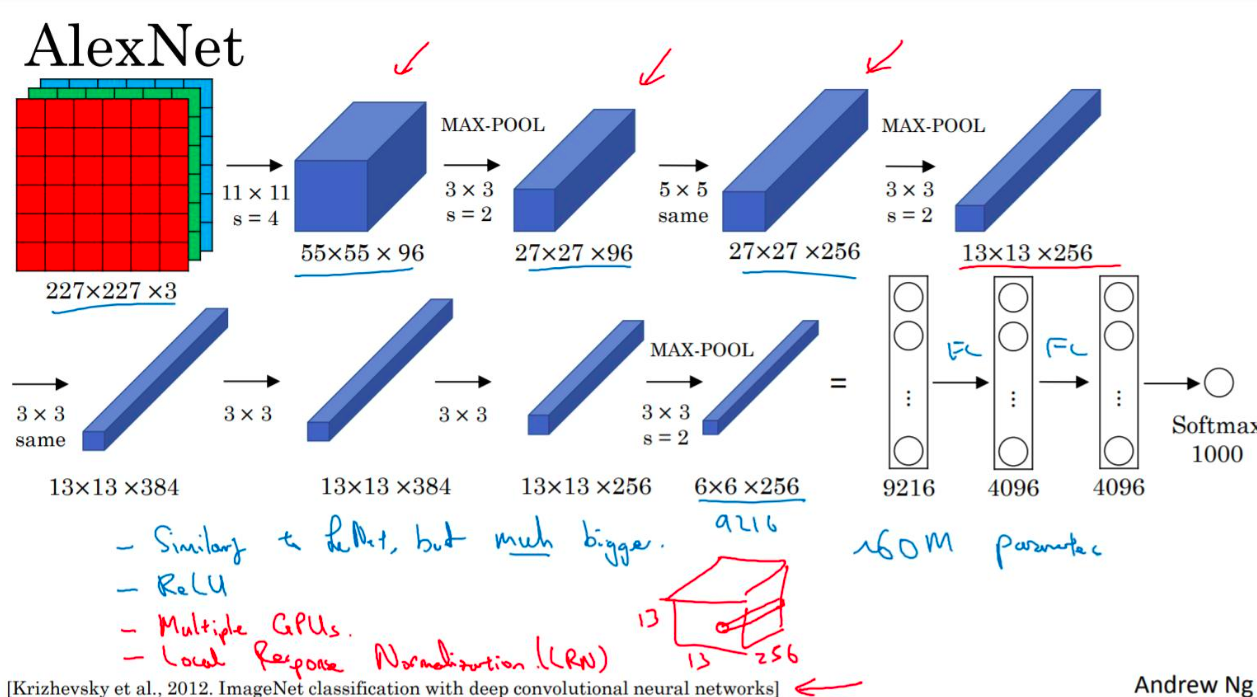

- Implemented the main architecture of AlexNet.

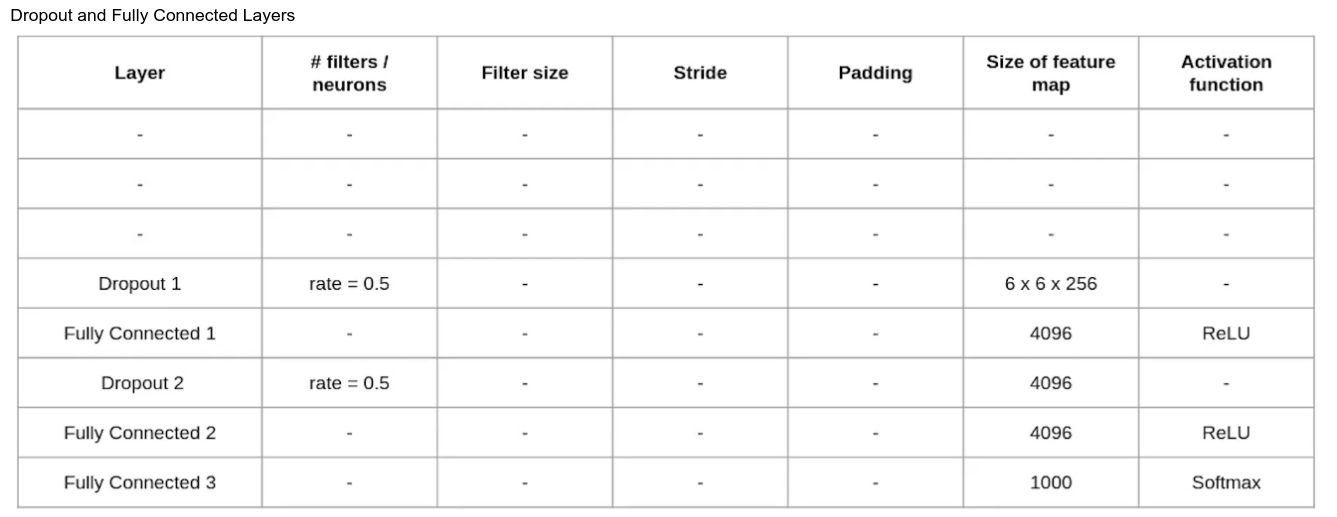

- Then wrote the Dropout and FC layers at the end. Dropout layers help reduce overfitting by randomly zeroing activations during training.

- Wrote the optimizer (SGD) and used binary cross entropy as the loss function. Then trained the model on the CIFAR dataset and got 89% accuracy.
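The steps above can be sketched as a scaled-down AlexNet-style model plus a single training step. This is only an illustration under stated assumptions: the class name `SmallAlexNet`, the 3x3 kernels adapted to 32x32 inputs, the hidden size of 512, the learning rate, and the momentum are hypothetical, and applying binary cross entropy to one-hot targets via `nn.BCEWithLogitsLoss` is a guess at how the loss was used. Cross entropy is the more common choice for single-label classification.

```python
import torch
from torch import nn
import torch.nn.functional as F


class SmallAlexNet(nn.Module):
    """AlexNet-style stack: five conv layers, then Dropout + FC layers."""

    def __init__(self, num_classes=10):
        super().__init__()
        self.features = nn.Sequential(
            nn.Conv2d(3, 64, kernel_size=3, padding=1), nn.ReLU(inplace=True),
            nn.MaxPool2d(2),                                   # 32x32 -> 16x16
            nn.Conv2d(64, 192, kernel_size=3, padding=1), nn.ReLU(inplace=True),
            nn.MaxPool2d(2),                                   # 16x16 -> 8x8
            nn.Conv2d(192, 384, kernel_size=3, padding=1), nn.ReLU(inplace=True),
            nn.Conv2d(384, 256, kernel_size=3, padding=1), nn.ReLU(inplace=True),
            nn.Conv2d(256, 256, kernel_size=3, padding=1), nn.ReLU(inplace=True),
            nn.MaxPool2d(2),                                   # 8x8 -> 4x4
        )
        self.classifier = nn.Sequential(
            nn.Dropout(p=0.5),                                 # active only in train mode
            nn.Linear(256 * 4 * 4, 512), nn.ReLU(inplace=True),
            nn.Dropout(p=0.5),
            nn.Linear(512, 512), nn.ReLU(inplace=True),
            nn.Linear(512, num_classes),
        )

    def forward(self, x):
        return self.classifier(torch.flatten(self.features(x), 1))


torch.manual_seed(0)
model = SmallAlexNet()
optimizer = torch.optim.SGD(model.parameters(), lr=0.01, momentum=0.9)
loss_fn = nn.BCEWithLogitsLoss()  # binary cross entropy applied to raw logits

# Random batch standing in for CIFAR-10 images and labels
images = torch.randn(8, 3, 32, 32)
labels = torch.randint(0, 10, (8,))
targets = F.one_hot(labels, num_classes=10).float()  # BCE expects float targets

# One training step
model.train()
optimizer.zero_grad()
logits = model(images)
loss = loss_fn(logits, targets)
loss.backward()
optimizer.step()
```

In a real run this step would sit inside a loop over a `DataLoader`, and `model.eval()` would be called before measuring test accuracy so that dropout is switched off.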

- Local Setup (Conda recommended):
  - https://jupyter.readthedocs.io/en/latest/install/notebook-classic.html
  - https://docs.conda.io/projects/conda/en/latest/user-guide/install/index.html#installation

- (Optional: Basic Python and libraries) https://duchesnay.github.io/pystatsml/index.html#scientific-python

- (Optional: for those with very basic ML knowledge; only 2.1-2.7) https://www.youtube.com/watch?v=PPLop4L2eGk&list=PLLssT5z_DsK-h9vYZkQkYNWcItqhlRJLN

- Linear Regression: https://medium.com/analytics-vidhya/simple-linear-regression-with-example-using-numpy-e7b984f0d15e

- Logistic Regression: https://towardsdatascience.com/logistic-regression-detailed-overview-46c4da4303bc

Find in NeuralNetIntro : W2-3.

- This one is highly recommended:

https://www.youtube.com/playlist?list=PLZHQObOWTQDNU6R1_67000Dx_ZCJB-3pi

Some more material (a bit extensive, so be careful):

https://youtube.com/playlist?list=PLtBw6njQRU-rwp5__7C0oIVt26ZgjG9NI

- Basic Backprop: https://ml-cheatsheet.readthedocs.io/en/latest/backpropagation.html

- Backprop (Mathematical Version): https://mattmazur.com/2015/03/17/a-step-by-step-backpropagation-example/

- Softmax: https://ljvmiranda921.github.io/notebook/2017/08/13/softmax-and-the-negative-log-likelihood/

- PyTorch (skip the CNN part for now if you want): https://pytorch.org/tutorials/beginner/basics/intro.html

- Optional guide: http://neuralnetworksanddeeplearning.com/chap1.html

Find in PyTorch : W2-3.